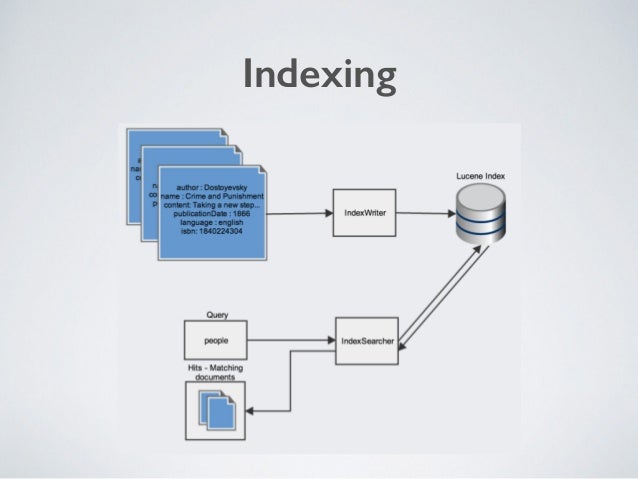

Search is an integral part of any application. Performing searches on terabytes and petabytes of data can be challenging when speed, performance, and high availability are core requirements. This blog post will pit Solr vs. Elasticsearch, two of the most popular open-source search engines, whose fortunes over the years have gone in different directions.

Both of them are built on top of Apache Lucene, so the features they support are very similar. However, they differ significantly in terms of deployment, scalability, query language, and many other functionalities.

About Apache Solr

Apache Solr is an open-source search server built on top of Lucene that provides all of Lucene's search capabilities through HTTP requests. It has been around for almost a decade and a half, making it a mature product with a broad user community. Solr offers powerful features such as distributed full-text search, faceting, near real-time indexing, high availability, NoSQL features, integrations with big data tools such as Hadoop, and the ability to handle rich-text documents such as Word and PDF files.

About Elasticsearch

Like Solr, Elasticsearch is an open-source search engine built on top of Apache Lucene. Together with Logstash and Kibana, it makes up the ELK Stack. It extends Lucene's powerful indexing and search functionality with RESTful APIs, and it distributes data across multiple servers using indices and shards. Elasticsearch is completely JSON-based and is well suited to time series and NoSQL data. It is much younger than Solr, but it has gained a lot of popularity because of its rich feature set. Its primary features include distributed full-text search, high availability, a powerful query DSL, multitenancy, geo search, and horizontal scaling.

Relative Popularity

According to DB-Engines, which ranks database management systems and search engines by popularity, Elasticsearch is ranked number one and Solr is ranked number three. Solr gained popularity in the first ten years of its existence, but Elasticsearch has been the most popular search engine since 2016.

Figure 1: DB-Engines Ranking - Elasticsearch vs. Solr Popularity (Source: DB-Engines)

Installation and Configuration

Java is the primary prerequisite for installing both engines. The default Elasticsearch configuration allocates 1GB of heap memory. You can change this in the jvm.options file inside the config directory.

By default, Solr allocates 512MB of heap memory to its instances. You can change this setting in the solr.in.sh file or, on Windows, the solr.in.cmd file. Both files are located inside the bin directory of the Solr installation.

Elasticsearch is easy to install and configure, but it is considerably heavier than Solr. At the time of writing, the latest version of Elasticsearch (7.7.1, released in June 2020) has a compressed size of 314.5MB, whereas Solr (8.5.2, released in May 2020) ships at 191.7MB.

Configuration files in Elasticsearch are written in YAML (for example, elasticsearch.yml), whereas Solr uses XML-based configuration files (for example, solrconfig.xml).

Indexing and Searching

Both Solr and Elasticsearch write their indexes with Lucene. However, they differ in sharding and replication (among other features), so their files and architectures differ as well. In addition, Elasticsearch has a native JSON query DSL, while Solr has a robust Standard Query Parser that follows Lucene query syntax.

Data Sources

Both tools support a wide range of data sources. Solr uses request handlers to ingest data from XML files, CSV files, databases, Microsoft Word documents, and PDFs. Through its native support for the Apache Tika library, it can extract and index content from over one thousand file types. Solr also ships with a simple command-line post tool. To ingest CSV-based data in a collection named testcollection, for example, you just need to use the following command:

Elasticsearch, on the other hand, is completely JSON-based. It supports data ingestion from multiple sources using the Beats family (lightweight data shippers available in the ELK Stack) and Logstash.

While both products are document-oriented search engines, Solr has always been more focused on enterprise-directed text searches with advanced information retrieval (IR).
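The heap settings described under Installation and Configuration come down to a few lines in each engine's configuration files. The sketch below is a hypothetical Python helper that renders those lines. `heap_config` is an illustrative name and is not part of either product. The sketch assumes that Elasticsearch reads `-Xms`/`-Xmx` from `config/jvm.options` and that Solr reads `SOLR_HEAP` from `bin/solr.in.sh` (or `bin/solr.in.cmd` on Windows). It uses the default sizes mentioned above.

```python
from typing import Dict, List


def heap_config(es_heap: str = "1g", solr_heap: str = "512m") -> Dict[str, List[str]]:
    """Hypothetical helper: return heap-size lines keyed by config file path.

    Paths are relative to each product's installation directory.
    """
    return {
        # Elasticsearch: initial (-Xms) and maximum (-Xmx) heap, kept equal.
        "config/jvm.options": [f"-Xms{es_heap}", f"-Xmx{es_heap}"],
        # Solr on Linux/macOS: SOLR_HEAP is read from this include script.
        "bin/solr.in.sh": [f'SOLR_HEAP="{solr_heap}"'],
        # Solr on Windows: the equivalent batch-file syntax.
        "bin/solr.in.cmd": [f"set SOLR_HEAP={solr_heap}"],
    }


if __name__ == "__main__":
    # Show what a 2GB Elasticsearch heap and a 1GB Solr heap would look like.
    for path, lines in heap_config(es_heap="2g", solr_heap="1g").items():
        print(path)
        for line in lines:
            print("   ", line)
```

The sketch keeps `-Xms` and `-Xmx` equal, following Elastic's guidance. This avoids resizing the heap while the node is running.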
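The About Elasticsearch section mentions that data is distributed using indices and shards. Elasticsearch documents its routing rule, in simplified form, as `shard = hash(_routing) % number_of_primary_shards`, where `_routing` defaults to the document ID. The Python sketch below illustrates that rule. It is not Elasticsearch's actual code: Elasticsearch uses a Murmur3 hash, and `zlib.crc32` stands in for it here. `pick_shard` is a hypothetical name.

```python
import zlib
from collections import Counter


def pick_shard(routing: str, num_primary_shards: int) -> int:
    """Map a routing value (by default the document _id) to a primary shard.

    Simplified rule: shard = hash(_routing) % num_primary_shards.
    Elasticsearch uses a Murmur3 hash; zlib.crc32 is only a stand-in here.
    """
    if num_primary_shards < 1:
        raise ValueError("an index needs at least one primary shard")
    return zlib.crc32(routing.encode("utf-8")) % num_primary_shards


if __name__ == "__main__":
    ids = [f"doc-{i}" for i in range(1000)]
    # Documents spread across the shards of a 3-shard index...
    print(Counter(pick_shard(doc_id, 3) for doc_id in ids))
    # ...and the same ID always lands on the same shard.
    print(pick_shard("doc-42", 3) == pick_shard("doc-42", 3))
```

The result depends on the number of primary shards, so changing that number would send existing documents to different shards. This is why Elasticsearch fixes the primary shard count when an index is created. Changing it later means reindexing or using the split/shrink APIs.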
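The Indexing and Searching section contrasts Elasticsearch's JSON query DSL with Solr's Standard Query Parser. The sketch below shows roughly the same search, terms matched in a `title` field, expressed both ways. The field name and search text are made-up examples. The Elasticsearch version is a `match` query body for the `_search` endpoint. The Solr version puts Lucene syntax in the `q` parameter, with `defType=lucene` selecting the standard parser. The sketch only builds the payloads and sends nothing.

```python
import json
from urllib.parse import urlencode


def es_match_query(field: str, text: str) -> str:
    """JSON body for Elasticsearch's _search endpoint using the Query DSL."""
    return json.dumps({"query": {"match": {field: text}}})


def solr_standard_params(field: str, text: str) -> str:
    """Query string for Solr's /select handler using the Standard Query Parser.

    defType=lucene selects the standard parser (it is also Solr's default).
    The parentheses apply the field to every term; Lucene special characters
    in `text` are NOT escaped here.
    """
    return urlencode({"q": f"{field}:({text})", "defType": "lucene"})


if __name__ == "__main__":
    # Body for POST http://localhost:9200/<index>/_search
    print(es_match_query("title", "open source"))
    # Query string for GET http://localhost:8983/solr/<collection>/select?...
    print(solr_standard_params("title", "open source"))
```

Both engines combine multiple terms with OR by default, which is why these two requests are only roughly equivalent. Scoring and analysis settings can still make their results differ.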
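The post-tool command for the testcollection example in the Data Sources section is not reproduced here. At the HTTP level, Solr's update handler accepts CSV when the request's `Content-Type` is `application/csv`. The sketch below builds such a request with Python's standard library and does not send it. It assumes a Solr node on the default port 8983 and an existing testcollection. The sample CSV columns are made up, and `solr_csv_request` is a hypothetical name.

```python
import urllib.request

SOLR_URL = "http://localhost:8983/solr"  # assumption: local node, default port


def solr_csv_request(csv_text: str, collection: str = "testcollection") -> urllib.request.Request:
    """Build (but do not send) a CSV update request for a Solr collection."""
    url = f"{SOLR_URL}/{collection}/update?commit=true"
    return urllib.request.Request(
        url,
        data=csv_text.encode("utf-8"),
        headers={"Content-Type": "application/csv"},
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical sample data: a header row followed by one document per line.
    sample = "id,title\n1,Intro to Lucene\n2,Solr vs Elasticsearch\n"
    req = solr_csv_request(sample)
    print(req.get_method(), req.full_url)
    # With Solr running, urllib.request.urlopen(req) would send it.
```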
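On the Elasticsearch side, the Data Sources section notes that ingestion is JSON-based. Beats and Logstash normally handle this for you. Underneath, Elasticsearch's bulk API takes newline-delimited JSON (NDJSON): an action line, then the document source, with the whole body ending in a newline. The minimal sketch below builds such a request without sending it. The index name `testindex`, the sample documents, and a local node on the default port 9200 are all assumptions.

```python
import json
import urllib.request
from typing import Iterable, Mapping

ES_URL = "http://localhost:9200"  # assumption: local node, default port


def bulk_body(index: str, docs: Iterable[Mapping]) -> bytes:
    """Encode documents as NDJSON for the _bulk endpoint."""
    lines = []
    for doc in docs:
        lines.append(json.dumps({"index": {"_index": index}}))  # action line
        lines.append(json.dumps(doc))  # document source
    # The bulk API requires the body to end with a newline.
    return ("\n".join(lines) + "\n").encode("utf-8")


def bulk_request(index: str, docs: Iterable[Mapping]) -> urllib.request.Request:
    """Build (but do not send) a POST /_bulk request."""
    return urllib.request.Request(
        f"{ES_URL}/_bulk",
        data=bulk_body(index, docs),
        headers={"Content-Type": "application/x-ndjson"},
        method="POST",
    )


if __name__ == "__main__":
    docs = [{"title": "Intro to Lucene"}, {"title": "Solr vs Elasticsearch"}]
    print(bulk_body("testindex", docs).decode("utf-8"), end="")
```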
